A Lattice Is Not a Curve

The transition to post-quantum security replaces elliptic curves with lattice-based math, introducing new trade-offs for handshake latency and packet fragmentation.

I remember the first time I saw an X25519 public key. It was tiny—just 32 bytes of hexadecimal poetry that felt like magic, providing 128 bits of security with almost zero CPU overhead. Now, I’m looking at ML-KEM-768 outputs that look like a wall of noise, and my network stacks are starting to groan under the weight of packets that no longer fit in a single frame.

For the last decade, we’ve lived in a golden age of Elliptic Curve Cryptography (ECC). We got used to keys so small they could fit in a tweet and signatures so fast they were practically free. But the looming shadow of a Cryptographically Relevant Quantum Computer (CRQC) is forcing us to trade that elegance for the "messy" reality of lattices.

The shift to Post-Quantum Cryptography (PQC) isn't just a library update. It’s a fundamental change in how we think about network overhead, packet fragmentation, and handshake latency.

The Elegance We’re Losing

Elliptic curves work because of the Elliptic Curve Discrete Logarithm Problem (ECDLP). You take a point on a curve, multiply it by a large scalar, and recovering that scalar from the resulting point is computationally infeasible for a classical computer.

It’s visually and mathematically clean. In Python, using a library like cryptography, generating an X25519 key pair looks like this:

```python
from cryptography.hazmat.primitives.asymmetric import x25519

# Generate a private key for Alice
alice_private_key = x25519.X25519PrivateKey.generate()
alice_public_key = alice_private_key.public_key()

# Alice's public key is only 32 bytes!
# (public_bytes_raw() needs a reasonably recent release of cryptography)
public_bytes = alice_public_key.public_bytes_raw()
print(f"X25519 Public Key Size: {len(public_bytes)} bytes")
print(f"Hex: {public_bytes.hex()}")
```

This 32-byte key is the reason TLS 1.3 handshakes are so snappy. It fits comfortably within a single TCP segment, even when bundled with the other ClientHello extensions.

Enter the Lattice

Lattice-based cryptography, specifically the Module-LWE (Learning With Errors) problem that underpins ML-KEM (the standardized form of Kyber), doesn't rely on points on a curve. Instead, it relies on the difficulty of recovering a hidden point in a high-dimensional grid once a bit of "noise" has been added to the coordinates.

Imagine a 2D grid of dots. If I give you a point and ask you to find the nearest dot, that’s easy. But if I give you a grid with hundreds of dimensions and add random noise to the coordinates, finding the original grid point is believed to be infeasible, even for a quantum computer.
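To make the "noisy grid" picture a little more concrete, here is a toy sketch of plain LWE in Python. The modulus, dimension, and noise bound are tiny values I picked for illustration (they are nowhere near secure), and real ML-KEM works over polynomial modules rather than plain integer vectors, so treat this as intuition only.

```python
import random

Q = 97   # toy modulus (ML-KEM uses q = 3329)
N = 4    # toy dimension, far too small to be secure


def lwe_sample(secret, rng, noise_bound=2):
    """Return one noisy equation (a, b) with b = <a, secret> + e mod Q."""
    a = [rng.randrange(Q) for _ in range(len(secret))]
    e = rng.randint(-noise_bound, noise_bound)  # the small "noise" term
    b = (sum(ai * si for ai, si in zip(a, secret)) + e) % Q
    return a, b


def centered(x):
    """Map x mod Q into the symmetric range around zero."""
    x %= Q
    return x - Q if x > Q // 2 else x


rng = random.Random(0)
secret = [rng.randrange(Q) for _ in range(N)]
samples = [lwe_sample(secret, rng) for _ in range(8)]

# Whoever knows the secret sees only small residuals (the noise itself).
residuals = [
    centered(b - sum(ai * si for ai, si in zip(a, secret)))
    for a, b in samples
]
print("residuals with the secret:", residuals)
```

Without the secret, each `b` looks like a random number modulo `Q`. The hardness assumption is that, at real-world sizes, nobody can work backwards from the `(a, b)` pairs to the secret.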

The cost of this security is size. While an X25519 public key is 32 bytes, an ML-KEM-768 public key is 1,184 bytes. The ciphertext (the "encrypted" key sent back) is 1,088 bytes.

I’ve spent the last few weeks benchmarking this using the OQS (Open Quantum Safe) libraries. Here is what key generation looks like in Go using the liboqs-go wrapper:

```go
package main

import (
	"fmt"
	"log"

	"github.com/open-quantum-safe/liboqs-go/oqs"
)

func main() {
	// Initialize the Key Encapsulation Mechanism (KEM) for Kyber768.
	// Newer liboqs releases may expose the standardized variant as "ML-KEM-768".
	kemName := "Kyber768"
	client := oqs.KeyEncapsulation{}
	defer client.Clean()

	if err := client.Init(kemName, nil); err != nil {
		log.Fatal(err)
	}

	// Generate the key pair
	publicKey, err := client.GenerateKeyPair()
	if err != nil {
		log.Fatal(err)
	}

	fmt.Printf("Algorithm: %s\n", kemName)
	fmt.Printf("Public Key Size: %d bytes\n", len(publicKey))

	// For comparison, let's look at the ciphertext size.
	// In a real handshake, this is what the server sends back
	// (simulated here). EncapSecret returns the ciphertext first,
	// then the shared secret.
	ciphertext, _, err := client.EncapSecret(publicKey)
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("Ciphertext Size: %d bytes\n", len(ciphertext))
}
```

When you run this, the output is a wake-up call:

```
Algorithm: Kyber768
Public Key Size: 1184 bytes
Ciphertext Size: 1088 bytes
```

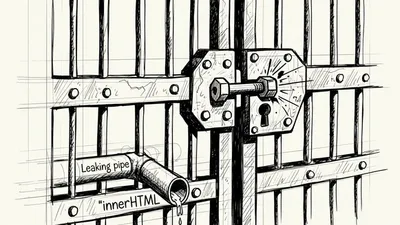

The Fragmentation Trap

Why do those extra 1,100 bytes matter? Because of the Maximum Transmission Unit (MTU).

The standard MTU for Ethernet is 1,500 bytes. When you account for the IPv4 header (20 bytes) and the TCP header (20 bytes, without options), you’re left with roughly 1,460 bytes for your actual data.

In a standard TLS 1.3 handshake using ECC, the ClientHello message—which contains the public key share—comfortably fits into a single packet. With ML-KEM, the ClientHello suddenly swells. If you include a hybrid key exchange (sending both an X25519 key and an ML-KEM key for safety), your ClientHello is almost certainly going to exceed the MTU.
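The arithmetic is easy to sketch. The header and key sizes below come from the figures above. The 300-byte baseline for "everything else in the ClientHello" is my own assumption and varies a lot between clients, so plug in your own number.

```python
import math

MTU = 1500          # standard Ethernet MTU, in bytes
IPV4_HEADER = 20    # no IP options
TCP_HEADER = 20     # no TCP options
MSS = MTU - IPV4_HEADER - TCP_HEADER  # payload bytes per TCP segment

X25519_SHARE = 32       # X25519 public key
MLKEM768_SHARE = 1184   # ML-KEM-768 public key
BASELINE_HELLO = 300    # ASSUMPTION: ClientHello bytes excluding the key share


def segments_needed(key_share_bytes, baseline=BASELINE_HELLO):
    """Number of TCP segments a ClientHello of this size occupies."""
    return math.ceil((baseline + key_share_bytes) / MSS)


classical = segments_needed(X25519_SHARE)
hybrid = segments_needed(X25519_SHARE + MLKEM768_SHARE)

print(f"MSS: {MSS} bytes")
print(f"classical ClientHello: {BASELINE_HELLO + X25519_SHARE} bytes, {classical} segment(s)")
print(f"hybrid ClientHello: {BASELINE_HELLO + X25519_SHARE + MLKEM768_SHARE} bytes, {hybrid} segment(s)")
```

With that assumed baseline, the hybrid key share alone is enough to push the ClientHello over a single segment.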

The "Tail Loss" Problem

When a handshake spans multiple packets, you hit the "multi-packet tax." If the second packet of a ClientHello is dropped, the entire handshake stalls until that packet is retransmitted. I've found that in high-loss environments (like unstable mobile networks), the probability of a handshake failure increases non-linearly with the number of packets required.

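If you're building systems that rely on UDP (like QUIC/HTTP3), this is even more dangerous. QUIC does not allow its datagrams to be fragmented at the IP layer, so a large ClientHello has to be split across multiple Initial packets, and losing any one of them delays the handshake. Larger handshake messages also press against QUIC's anti-amplification limit, which caps what a server may send before it has validated the client's address, and some middleboxes silently drop large or fragmented UDP traffic.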
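A back-of-the-envelope model of the multi-packet tax, assuming each packet is lost independently with the same probability. That assumption is mine, and real mobile loss tends to be bursty, so this is a lower-fidelity sketch rather than a measurement.

```python
def stall_probability(packets, loss_rate):
    """Chance that at least one of `packets` packets is lost (independent loss)."""
    return 1.0 - (1.0 - loss_rate) ** packets


for loss_rate in (0.01, 0.05):
    for packets in (1, 2, 3):
        p = stall_probability(packets, loss_rate)
        print(f"loss={loss_rate:.0%} packets={packets} stall chance={p:.2%}")
```

Every extra packet in the first flight is another chance to wait on a retransmission timer, which is why shaving the ClientHello back under one packet matters more than it looks.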

Hybrid Schemes: The Safety Net

We aren't ready to trust lattices alone yet. There’s always a chance that a clever mathematician finds a non-quantum shortcut to solving LWE. To mitigate this, we use Hybrid Key Exchange.

We combine a classical key (X25519) with a post-quantum key (ML-KEM). The resulting shared secret is a concatenation of both, passed through a Key Derivation Function (KDF). Even if the lattice part is broken, you’re still as secure as you were yesterday with ECC.

Here is a conceptual look at how you’d derive a hybrid key in a Node.js environment:

```javascript
const crypto = require('crypto');

// 1. Imagine we received two shared secrets:
// one from X25519 and one from ML-KEM-768
const classicalSecret = crypto.randomBytes(32);
const pqcSecret = crypto.randomBytes(32);

// 2. We don't just add them. We hash them together.
// This is a simplified version of what happens in TLS 1.3,
// which feeds the concatenated secrets into its HKDF-based key schedule
const combinedSecret = crypto.createHash('sha256')
  .update(classicalSecret)
  .update(pqcSecret)
  .digest();

console.log(`Hybrid Shared Secret (32 bytes): ${combinedSecret.toString('hex')}`);
```

This hybrid approach is what Google and Cloudflare have already deployed. If you check your Chrome security tab while visiting a Cloudflare-hosted site, you might see X25519Kyber768Draft00 or, in newer releases, its ML-KEM-based successor X25519MLKEM768.
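If you want something a step closer to how a key schedule would consume the two secrets, here is a sketch of the same concatenate-then-derive idea using HKDF (RFC 5869) built from the Python standard library. The all-zero salt and the "hybrid demo" label are placeholders I chose for illustration; this is not the TLS 1.3 key schedule itself.

```python
import hashlib
import hmac
import os


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869) with SHA-256: PRK = HMAC(salt, IKM)."""
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF-Expand (RFC 5869) with SHA-256."""
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine(classical_secret: bytes, pqc_secret: bytes) -> bytes:
    """Concatenate both fixed-length secrets, then derive one key via HKDF."""
    # Illustrative salt and label; a real protocol defines its own.
    prk = hkdf_extract(b"\x00" * 32, classical_secret + pqc_secret)
    return hkdf_expand(prk, b"hybrid demo", 32)


classical_secret = os.urandom(32)  # stand-in for the X25519 output
pqc_secret = os.urandom(32)        # stand-in for the ML-KEM-768 output
hybrid_key = combine(classical_secret, pqc_secret)
print(f"Hybrid key ({len(hybrid_key)} bytes): {hybrid_key.hex()}")
```

Because both inputs feed the derivation, an attacker needs to recover both shared secrets to reconstruct the final key.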

The CPU vs. Network Trade-off

Interestingly, lattice operations are computationally *faster* than many elliptic-curve operations. They involve arithmetic on small integers modulo a small prime rather than point arithmetic over a 255-bit field.

If you are CPU-bound, ML-KEM is a win. But most of us aren't CPU-bound during a handshake; we are network-bound. The time spent pushing those extra bytes across a 4G connection far outweighs the few microseconds saved on the CPU.

I ran a quick test on a standard AWS t3.medium instance. Here’s a rough comparison of the timings:

| Algorithm | KeyGen (µs) | Encaps (µs) | Decaps (µs) |
| :--- | :--- | :--- | :--- |
| X25519 | 45 | 40 | 38 |
| ML-KEM-768 | 22 | 30 | 28 |

The math is up to 2x faster! But the data is more than 30x larger. This is the central paradox of post-quantum security: The crypto is faster, but the network is slower.
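To put the two sides of that paradox in the same unit, here is a rough sketch using the timings from the table and the key sizes quoted earlier. The 5 Mbps link rate is an assumption I picked to stand in for a modest mobile connection, and the model only counts serialization time, ignoring round trips and retransmissions.

```python
# Timings from the table above, in microseconds (keygen + encaps + decaps)
X25519_US = 45 + 40 + 38
MLKEM768_US = 22 + 30 + 28

# Bytes on the wire for one key exchange (public key + response)
X25519_BYTES = 32 + 32
MLKEM768_BYTES = 1184 + 1088

LINK_MBPS = 5.0  # ASSUMPTION: modest mobile link; adjust for your users


def transfer_time_us(num_bytes, mbps=LINK_MBPS):
    """Serialization time in microseconds (1 Mbps moves 1 bit per microsecond)."""
    return num_bytes * 8 / mbps


cpu_saved = X25519_US - MLKEM768_US
extra_bytes = MLKEM768_BYTES - X25519_BYTES
network_cost = transfer_time_us(extra_bytes)

print(f"CPU time saved: {cpu_saved} us")
print(f"Extra bytes on the wire: {extra_bytes}")
print(f"Extra transfer time at {LINK_MBPS} Mbps: {network_cost:.0f} us")
```

Under these assumptions the extra transfer time is measured in milliseconds while the CPU savings are measured in tens of microseconds.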

Implementation Gotchas

If you're looking to implement this today, you shouldn't be writing your own lattice math. Stick to verified providers. However, there are architectural decisions I’ve seen trip people up:

1. Buffer Sizes: If you have hardcoded buffer sizes in your proxy or load balancer for "Handshake Max Size," it’s time to increase them. I’ve seen internal Go proxies crash because they expected a ClientHello to never exceed 2KB.

2. Middleboxes: Some older firewalls do "Deep Packet Inspection" (DPI) and assume a TLS handshake will follow specific patterns. Large, fragmented handshakes can look like a DDoS attack to a dumb firewall.

3. Certificate Sizes: We are also moving toward ML-DSA (Dilithium) for signatures. This means your certificate chain will also grow from <1KB to 7KB-10KB. This is where the real pain starts.
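For a feel of where the certificate growth in point 3 comes from, here is a sketch that adds up only the public keys and signatures in a handshake. The ML-DSA sizes are the ones published in FIPS 204; the two-certificate chain and the decision to ignore names, extensions, and SCTs are simplifying assumptions of mine.

```python
# Public key and signature sizes in bytes.
SIZES = {
    # Uncompressed P-256 point; 72 bytes is the DER signature upper bound.
    "ECDSA P-256": {"public_key": 65, "signature": 72},
    "ML-DSA-44": {"public_key": 1312, "signature": 2420},
    "ML-DSA-65": {"public_key": 1952, "signature": 3309},
}


def chain_crypto_bytes(algorithm, certificates=2):
    """Key and signature bytes: one of each per certificate,
    plus the CertificateVerify signature on the handshake."""
    s = SIZES[algorithm]
    return certificates * (s["public_key"] + s["signature"]) + s["signature"]


for name in SIZES:
    print(f"{name}: {chain_crypto_bytes(name)} bytes of keys and signatures")
```

Even the smallest ML-DSA parameter set lands near 10KB for this simplified chain, before any of the usual certificate metadata is counted.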

How to Test It Today

You don't need a quantum computer to see how this affects your app. You can force your browser to use post-quantum suites. In Chrome, go to chrome://flags/#enable-tls13-kyber (this flag name changes as the spec evolves; it may currently be "TLS 1.3 hybridized Kyber").

If you want to test programmatically, the cloudflare/circl library in Go is currently the gold standard for experimentation.

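```go
package main

import (
	"fmt"
	"log"

	"github.com/cloudflare/circl/kem/hybrid"
)

func main() {
	// Example of accessing the hybrid scheme directly
	scheme := hybrid.Kyber768X25519()
	pk, sk, err := scheme.GenerateKeyPair()
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("%s public key: %d bytes\n", scheme.Name(), scheme.PublicKeySize())
	// ... use as normal, e.g. scheme.Encapsulate(pk) and scheme.Decapsulate(sk, ct)
	_, _ = pk, sk
}
```

Final Thoughts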

A lattice is not a curve. It’s a dense, multi-dimensional forest. Transitioning into that forest is necessary because the quantum "chainsaw" is being built, but we shouldn't pretend it's a drop-in replacement.

As developers, our job is to monitor handshake latency metrics now. If you aren't tracking tls_handshake_duration_ms partitioned by geography, you won't know when the "lattice tax" starts hitting your users in high-latency regions.
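As a starting point, here is a sketch of the kind of aggregation I mean: group handshake durations by region and look at the median and the tail. The function name, region labels, and sample values are all made up for illustration; in practice the samples would come from your metrics pipeline.

```python
from collections import defaultdict
from statistics import quantiles


def handshake_percentiles(samples):
    """Group (region, duration_ms) samples by region and return p50/p95.

    Each region needs at least two samples for quantiles() to work.
    """
    by_region = defaultdict(list)
    for region, duration_ms in samples:
        by_region[region].append(duration_ms)

    report = {}
    for region, durations in by_region.items():
        # n=100 yields the 1st..99th percentile cut points
        cuts = quantiles(durations, n=100, method="inclusive")
        report[region] = {"p50": cuts[49], "p95": cuts[94]}
    return report


# Made-up sample data purely for illustration
samples = [("eu-west", d) for d in (40, 42, 45, 47, 50, 55, 60, 62, 70, 120)]
samples += [("ap-south", d) for d in (110, 115, 120, 130, 140, 150, 180, 200, 260, 400)]

report = handshake_percentiles(samples)
for region, stats in report.items():
    print(region, stats)
```

Watching the p95 per region before and after enabling a hybrid key exchange is a simple way to see whether the extra packets are hurting the users with the worst connections.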

The security is non-negotiable, but the performance impact is something we have to engineer our way out of. Whether through better compression, TCP tuning, or simply accepting that the web is getting a little "heavier," the era of the 32-byte key is coming to an end. It’s time to make room for the grids.